As the adoption of Artificial Intelligence (AI) accelerates across industries, a specific narrative is gaining traction: AI is a roadblock to clean energy. The argument sounds plausible. AI models require heavy-duty computing; computing demands massive power and extreme cooling systems; and ultimately, data centers are blamed for pushing the world further away from global climate targets.

However, for CIOs, CTOs, and infrastructure leaders, the more relevant question is not whether AI consumes energy, but whether AI is fundamentally at odds with the clean energy transition, or whether we are misreading the root of the problem.

The short answer: the primary issue isn’t AI itself, but how AI is deployed.

Why the “AI vs. Clean Energy” Narrative Seems Convincing

Public concern is undoubtedly rooted in reality. Data centers do consume vast amounts of electricity, and in certain regions, water usage for cooling systems has become a serious issue. However, concluding that AI growth automatically derails the decarbonization agenda is a misleading oversimplification.

According to International Energy Agency (IEA) estimates, data centers and data transmission networks each account for roughly 1–1.5% of global electricity consumption. That share is significant, but the surge in digital demand over the last decade has not produced a proportional surge in global carbon emissions.

Why is this the case? Because behind the scenes, there have been massive advancements in:

- Hardware efficiency

- Precision cooling technologies

- Adoption of renewable energy

- Decarbonization of the power grid

This means AI energy consumption cannot be analyzed statically—it is heavily influenced by system design and operational discipline.
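To make the point concrete, here is a minimal sketch of how operational emissions depend on more than raw compute. It assumes the common simplification that emissions equal IT energy multiplied by facility overhead (expressed as Power Usage Effectiveness, PUE, a metric not discussed above) and by the carbon intensity of the grid. The function name and all input figures are illustrative assumptions, not measured data.

```python
def operational_emissions_kg(
    it_energy_kwh: float,
    pue: float,
    grid_intensity_kg_per_kwh: float,
) -> float:
    """Estimate operational CO2 emissions in kilograms.

    Facility energy is approximated as IT energy x PUE, then multiplied
    by the grid's carbon intensity (kg CO2 per kWh).
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_energy_kwh * pue * grid_intensity_kg_per_kwh


# Illustrative scenario (made-up numbers): IT energy doubles, while
# cooling overhead and grid carbon intensity both improve.
baseline = operational_emissions_kg(1_000_000, pue=1.8, grid_intensity_kg_per_kwh=0.50)
later = operational_emissions_kg(2_000_000, pue=1.2, grid_intensity_kg_per_kwh=0.30)

print(f"baseline: {baseline:,.0f} kg CO2")
print(f"later:    {later:,.0f} kg CO2")
```

In this hypothetical case the IT load doubles, yet estimated emissions fall from about 900,000 kg to about 720,000 kg, because facility overhead and grid carbon intensity improved at the same time.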

AI Inference: Not All Workloads Are Created Equal

One of the biggest mistakes in this debate is assuming that all AI workloads have the same energy footprint. The reality is vastly different. Processing a simple product recommendation or a light data classification is not comparable to rendering AI-generated video, running very long context windows, or serving very large models under ultra-tight latency requirements.

Even within the same family of AI models, energy consumption can fluctuate sharply depending on throughput, context length, target latency, routing efficiency, and serving architecture. The IEA notes that in many modern machine learning systems, inference can account for a larger share of energy use than the initial training. This is not only a warning sign; it also shows that serving efficiency is now the most crucial lever for control.

The far more relevant questions to ask are:

- How efficiently is the workload being executed?

- Does every request truly require the largest model?

- Is the serving architecture aligned with the workload characteristics?

- Is the data center facility designed for high-density AI without power wastage?

A spike in computing does not have to result in a spike in energy consumption—as long as the infrastructure is designed with discipline.
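The question of whether every request needs the largest model can be illustrated with a small routing sketch. Everything in it is hypothetical: the tier names, the complexity scores, and the watt-hour figures per request are placeholders chosen for illustration, not benchmarks of any real model or serving stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    max_complexity: int    # highest request complexity score this tier handles
    wh_per_request: float  # assumed energy per request (illustrative only)


# Hypothetical tiers, ordered from cheapest to most expensive.
TIERS = [
    ModelTier("small", max_complexity=3, wh_per_request=0.05),
    ModelTier("medium", max_complexity=7, wh_per_request=0.5),
    ModelTier("large", max_complexity=10, wh_per_request=3.0),
]


def route(complexity: int) -> ModelTier:
    """Return the cheapest tier whose capability covers the request."""
    for tier in TIERS:
        if complexity <= tier.max_complexity:
            return tier
    return TIERS[-1]  # fall back to the largest tier


def total_energy_wh(complexities: list[int], always_largest: bool = False) -> float:
    """Sum the assumed energy for a batch of requests."""
    if always_largest:
        return len(complexities) * TIERS[-1].wh_per_request
    return sum(route(c).wh_per_request for c in complexities)


# A made-up batch of requests, scored 1 (trivial) to 10 (hardest).
requests = [1, 2, 2, 3, 5, 6, 9, 1, 2, 10]
routed = total_energy_wh(requests)
naive = total_energy_wh(requests, always_largest=True)

print(f"routed: {routed:.2f} Wh, always-largest: {naive:.2f} Wh")
```

With these assumed numbers, sending only the hardest requests to the largest tier uses roughly a quarter of the energy of sending everything there. Real savings depend entirely on the actual workload mix and on how reliably request complexity can be estimated.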

Water usage is often criticized in absolute terms, as if every AI data center automatically becomes a burden on local water resources. The reality is more complex. While traditional cooling—specifically evaporative cooling—relies on significant amounts of water, the industry is moving toward next-generation cooling that is far more water-efficient.

Microsoft, for instance, announced that its latest generation of AI data center designs—rolled out starting in August 2024—can operate with zero water for cooling. This approach utilizes a closed-loop, chip-level cooling system without evaporation.

This isn’t just a vendor experiment; it’s a strong indicator of the industry’s evolution. Metrics like Water Usage Effectiveness (WUE) are now primary parameters in the design of AI-ready facilities, particularly in water-stressed regions.
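For readers unfamiliar with the metric, WUE is commonly defined as annual site water usage in liters divided by IT equipment energy in kilowatt-hours, so lower is better. The sketch below applies that definition to two hypothetical facilities; the water and energy figures are made up for illustration and do not describe any specific operator.

```python
def wue_l_per_kwh(site_water_liters: float, it_energy_kwh: float) -> float:
    """Water Usage Effectiveness: liters of water per kWh of IT energy."""
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if site_water_liters < 0:
        raise ValueError("Water usage cannot be negative")
    return site_water_liters / it_energy_kwh


# Two hypothetical facilities with the same annual IT energy use.
it_energy_kwh = 10_000_000
evaporative = wue_l_per_kwh(18_000_000, it_energy_kwh)  # evaporative cooling
closed_loop = wue_l_per_kwh(200_000, it_energy_kwh)     # closed-loop cooling

print(f"evaporative: {evaporative:.2f} L/kWh")
print(f"closed-loop: {closed_loop:.2f} L/kWh")
```

Comparing facilities on liters per kilowatt-hour, rather than on absolute water volume, makes it easier to separate the effect of cooling design from the effect of facility size.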

AI Can Actually Accelerate the Clean Energy Transition

The “AI is destroying the climate” narrative often ignores a vital point: AI’s contribution to optimizing energy systems. The IEA emphasizes that AI plays a crucial role in:

- Predicting electricity demand with precision

- Optimizing energy dispatch

- Increasing grid reliability

- Predicting generation asset failures

- Managing the integration of volatile renewable energy sources

As the power grid takes on a growing share of variable sources like solar and wind, operational complexity skyrockets. Without AI, modern grid management would be far more wasteful and unstable. Counting the kilowatt-hours AI consumes without accounting for its systemic benefits is reading only half the story.

What We Should Actually Demand from the Industry

A productive perspective isn’t “Should we choose AI or clean energy?” but rather, “What kind of AI models, what data center specifications, and what power grid systems do we need?” Constructive technical criticism remains essential.

For CIOs and infrastructure leaders, the minimum standards to demand include:

- Transparent and measurable operational emissions

- Cooling designs tailored to geographical conditions

- Power procurement strategies that truly add to the clean energy supply

- Disciplined efficiency across models, code, and physical infrastructure

The problem is not the existence of AI, but the quality of design decisions.

Conclusion

Yes, AI requires massive power.

Yes, advanced AI facilities demand extreme cooling systems.

However, the evidence points in a consistent direction: efficiency gains have offset much of the growth in digital workloads. Emissions are not rising in proportion to demand, water consumption can be drastically reduced, and AI is becoming a key tool in optimizing the global energy system.

For organizations evaluating AI infrastructure, the next strategic step is not to get stuck in ideological debates, but to perform disciplined technical due diligence on:

- Power supply readiness

- AI-density cooling design

- Interconnection capacity

- Long-term expansion space

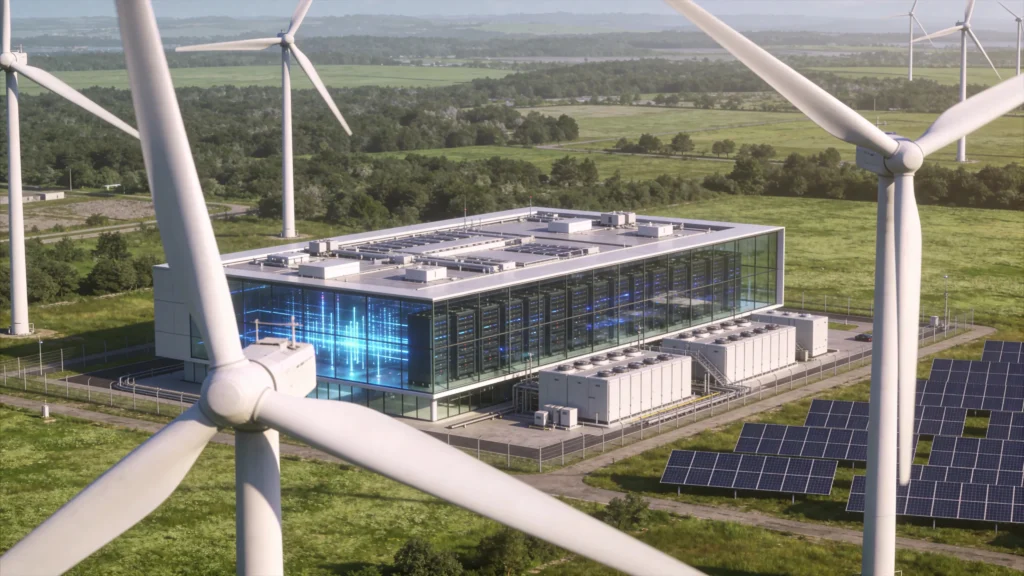

In practice, many enterprises are opting for AI-ready colocation facilities rather than building their own data centers from scratch—for the sake of speed, efficiency, and sustainability assurance.

Ready to Run AI on AI-Ready Infrastructure?

EDGE2 AI-Ready Data Center is designed to support high-density AI workloads with power readiness, modern cooling architecture, and long-term scalability. If your organization is evaluating efficient, sustainable, and growth-ready AI infrastructure, discuss your technical requirements with the EDGE2 team to get a real picture of AI readiness—no assumptions, no over-engineering.